Into the SAE-verse

Introducing Sparse Autoencoders for mechanistic interpretability.

These are my personal notes in preparation for a reading group presentation at VinUni. They cover essential background on Sparse Autoencoders (SAEs), enough to understand the paper Finding the Translation Switch (Wu et al., AAAI 2026).

Slides of the presentation: Google Slides.

Residual Stream

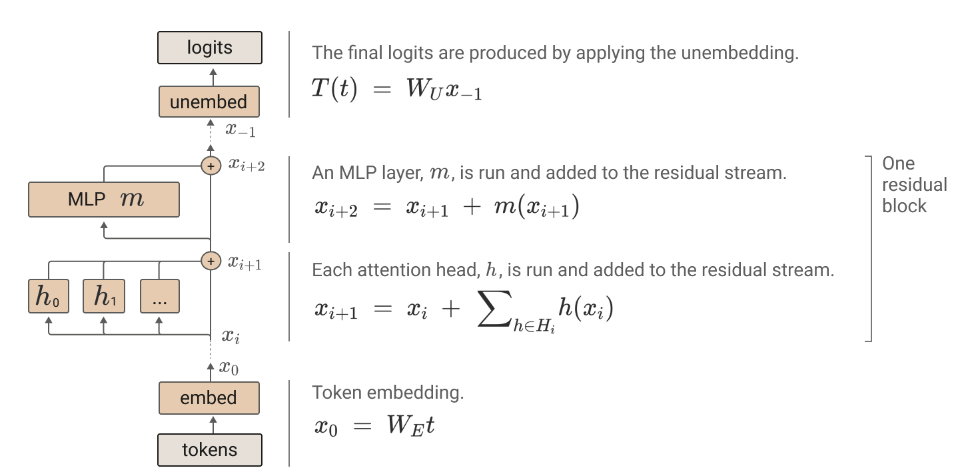

Internally, an LLM takes a sequence of embeddings and processes it layer by layer. In each layer, the input vector is passed through:

- a multi-head attention block (then added back to the residual stream)

- an MLP block (then added back to the residual stream)

Each sub-layer’s output is added to its own input via a residual connection rather than replacing it. In other words, the vector at any depth is the input embedding plus a running sum of every earlier sub-layer’s output. You can think of the residual stream as the model’s “information highway”: information is read, processed, then written back.
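To make the "running sum" picture concrete, here is a minimal sketch in plain Python. The `toy_sublayer` function is a made-up stand-in for an attention or MLP block (it just rescales its input), and layer normalization is left out entirely, so this only illustrates the bookkeeping, not a real transformer.

```python
import random

def add(u, v):
    """Element-wise sum of two equal-length vectors."""
    return [a + b for a, b in zip(u, v)]

def toy_sublayer(x, seed):
    """Hypothetical stand-in for an attention or MLP block (R^d -> R^d)."""
    rng = random.Random(seed)
    return [0.1 * rng.uniform(-1, 1) * xi for xi in x]

def forward(embedding, n_layers=3):
    resid = list(embedding)   # the residual stream starts as the embedding
    writes = []               # every sub-layer output written to the stream
    for layer in range(n_layers):
        for sub in range(2):  # 0 = "attention", 1 = "MLP"
            out = toy_sublayer(resid, seed=2 * layer + sub)  # read
            resid = add(resid, out)                          # write back
            writes.append(out)
    return resid, writes

embedding = [1.0, -2.0, 0.5, 3.0]
resid, writes = forward(embedding)

# Rebuild the final stream as embedding + sum of all sub-layer writes.
rebuilt = list(embedding)
for w in writes:
    rebuilt = add(rebuilt, w)
print(resid == rebuilt)
```

The final stream is exactly the embedding plus the six sub-layer writes, which is why interpretability work can ask what each individual write contributed.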

After the final layer, the residual stream output is multiplied by the unembedding matrix to produce raw logits, which are softmax-ed into a probability distribution over the vocabulary.
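The last step can be sketched in a few lines. The unembedding matrix `W_U` below is a hand-written toy with one row per vocabulary token (an assumed layout; some codebases store the transpose), and the final layer norm that real models usually apply before unembedding is omitted.

```python
import math

def unembed(resid, W_U):
    """logits[v] = dot(W_U[v], resid), one logit per vocabulary token."""
    return [sum(w * r for w, r in zip(row, resid)) for row in W_U]

def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

resid = [0.5, -1.0, 2.0]            # final residual stream, d_model = 3
W_U = [                             # toy vocabulary of 4 tokens
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 1.0],
]
logits = unembed(resid, W_U)
probs = softmax(logits)
predicted_token = probs.index(max(probs))
print(logits, predicted_token)
```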

Layer-wise residual stream activations are a major subject of interpretability work. The goal is often to find statements like “the model does X at layer Y.”

Polysemanticity

When we examine the activation vector of each layer’s residual stream, we see that some individual dimensions are more activated than others. In an idealized world, these dimensions would map cleanly 1-to-1 to human concepts—dimension 1023 fires for “dogs,” dimension 456 fires for “the color blue,” and so on.

However, if we try to find which tokens most frequently activate a given dimension, we quickly discover that dimensions activate on a wide variety of inputs. They don’t map to just one concept. This phenomenon is called polysemanticity, and it complicates interpretability efforts considerably.

We can’t simply say “neuron N at layer L does this and that” if that neuron fires on seemingly unrelated concepts like “skyscrapers OR lighthouses OR water towers.”

Superposition

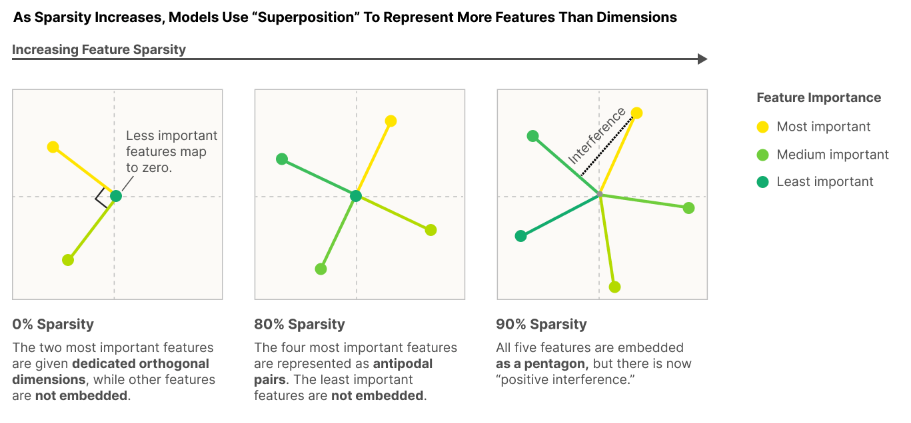

A hypothesized mechanism explaining polysemanticity is superposition. The idea: a model needs to learn more concepts than it has dimensions, so it “packs” multiple concepts into overlapping combinations of dimensions.

Think of “features” as the interpretable properties of the input we observe neurons (or embedding directions) responding to. Because the number of concepts far exceeds the number of dimensions, LLMs learn to encode features as combinations of dimensions rather than dedicating one dimension per concept.

During a forward pass, the model uses a (perhaps linear) combination of dimensions to represent a concept. This is efficient for the model but inconvenient for us—we can’t point to a single dimension and get a clean interpretation.
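A tiny hand-built example may help here. It is not taken from the Toy Models paper; it simply packs three hypothetical features into a 2-dimensional space as unit vectors 120 degrees apart, then looks at what one individual dimension responds to.

```python
import math

# Three feature directions squeezed into two dimensions.
angles_deg = [90, 210, 330]
directions = [(math.cos(math.radians(a)), math.sin(math.radians(a)))
              for a in angles_deg]

def embed(feature_values):
    """Superpose features: x = sum_i value_i * direction_i."""
    x0 = sum(v * d[0] for v, d in zip(feature_values, directions))
    x1 = sum(v * d[1] for v, d in zip(feature_values, directions))
    return (x0, x1)

def readout(x):
    """Project x back onto each feature direction."""
    return [x[0] * d[0] + x[1] * d[1] for d in directions]

x = embed([1.0, 0.0, 0.0])   # only feature 0 is active
scores = readout(x)          # roughly [1.0, -0.5, -0.5]

# How strongly dimension 1 responds to each feature on its own.
dim1_response = [d[1] for d in directions]
print(scores, dim1_response)
```

Dimension 1 responds to all three features, so read in isolation it looks polysemantic, while projecting onto the right direction still recovers feature 0 with some interference on the others. Applying a ReLU to the readout would zero out that negative interference in this particular setup.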

For a deeper treatment, see Elhage et al.’s Toy Models of Superposition (2022). Superposition is not the only cause of polysemanticity; see Superposition is not “just” neuron polysemanticity (blog post, 2024).

Into the SAE-verse

Sparse Autoencoders learn monosemantic features in the activation space

Ideally, we want some unit where an individual component corresponds cleanly to just one human concept. That way, we can point to a single component and say “feature 967 of layer 12 is responsible for X.”

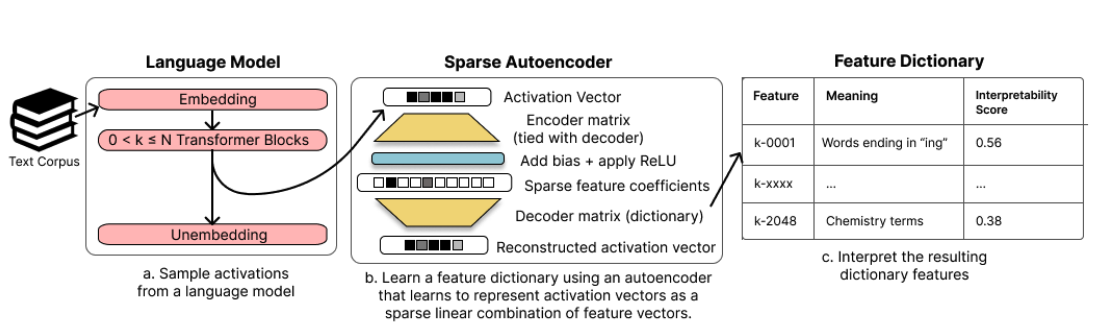

One popular method for achieving monosemanticity is training Sparse Autoencoders (SAEs) on residual stream activations. An SAE decomposes a densely activated residual stream vector into a sparsely activated hidden vector.

SAE Architecture and Training

The architecture is simple:

- Encoder: A single linear layer followed by a nonlinear activation (often ReLU or JumpReLU)

- Decoder: A single linear layer that reconstructs the original activation

Formally:

\[h(x) = f(W_{\text{enc}} \cdot x + b_{\text{enc}})\]

\[\hat{x} = W_{\text{dec}} \cdot h(x) + b_{\text{dec}}\]

The hidden state dimension ($d_{\text{sae}}$) is typically chosen to be several times larger than the model embedding dimension ($d_{\text{model}}$) – common expansion factors are 4×, 8×, or even 64×. This overcomplete basis combined with a sparsity constraint (L1 penalty or Top-K activation) means we get many possible features but only a few active at any time, yielding cleaner, more interpretable decompositions.
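The two equations translate almost line for line into code. The sketch below uses plain Python lists and hand-picked (not trained) weights with $d_{\text{model}} = 2$ and $d_{\text{sae}} = 4$, and assumes ReLU for $f$. I also assume `W_enc` has shape ($d_{\text{sae}}$, $d_{\text{model}}$) and `W_dec` has shape ($d_{\text{model}}$, $d_{\text{sae}}$), which matches the formulas above but not necessarily how a given library stores them.

```python
def add(u, v):
    return [a + b for a, b in zip(u, v)]

def matvec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def relu(v):
    return [max(0.0, a) for a in v]

def sae_forward(x, W_enc, b_enc, W_dec, b_dec):
    h = relu(add(matvec(W_enc, x), b_enc))   # h(x) = f(W_enc x + b_enc)
    x_hat = add(matvec(W_dec, h), b_dec)     # x_hat = W_dec h(x) + b_dec
    return h, x_hat

# Toy weights: each hidden unit detects +/- one input coordinate.
W_enc = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]   # 4 x 2
b_enc = [0.0, 0.0, 0.0, 0.0]
W_dec = [[1.0, -1.0, 0.0, 0.0], [0.0, 0.0, 1.0, -1.0]]       # 2 x 4
b_dec = [0.0, 0.0]

x = [2.0, -3.0]
h, x_hat = sae_forward(x, W_enc, b_enc, W_dec, b_dec)
n_active = sum(1 for a in h if a > 0)
print(h, x_hat, n_active)
```

With these weights, only two of the four hidden units fire and the input is reconstructed exactly. A trained SAE would typically be far wider, far sparser, and only approximately faithful.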

The training objective combines reconstruction loss with a sparsity term:

\[\mathcal{L}(x) = \|x - \hat{x}\|_2^2 + \alpha \|h(x)\|_1\]
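As a sanity check on the objective, here is the loss computed by hand on made-up numbers, with $\alpha = 0.1$ chosen arbitrarily. The `top_k` helper sketches the Top-K alternative: it enforces sparsity directly by keeping only the $k$ largest pre-activations, so variants built on it typically drop the L1 term. Details differ between implementations.

```python
def sae_loss(x, x_hat, h, alpha):
    """Squared L2 reconstruction error plus alpha times the L1 norm of h."""
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    sparsity = sum(abs(a) for a in h)
    return recon + alpha * sparsity

def top_k(pre_acts, k):
    """Keep the k largest pre-activations and zero out the rest."""
    order = sorted(range(len(pre_acts)), key=lambda i: pre_acts[i], reverse=True)
    keep = set(order[:k])
    return [a if i in keep else 0.0 for i, a in enumerate(pre_acts)]

x = [2.0, -3.0]
x_hat = [1.5, -2.0]            # an imperfect reconstruction
h = [2.0, 0.0, 0.0, 3.0]
loss = sae_loss(x, x_hat, h, alpha=0.1)
# recon = 0.25 + 1.0 = 1.25, sparsity = 5.0, so loss is about 1.75

sparse = top_k([0.2, 1.5, -0.3, 0.9], k=2)
print(loss, sparse)
```

Raising `alpha` pushes the optimizer toward fewer active features at the cost of a worse reconstruction, which is the trade-off the formula encodes.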

Pre-trained SAEs

Training SAEs is computationally expensive: you need to pass billions of tokens through the LLM and collect activations at every layer. For this reason, most papers use pre-trained SAEs. Gemma Scope (by DeepMind) provides pre-trained SAEs across the layers of Gemma 2 models; Llama Scope offers similar tooling for Llama.

Interpreting SAE Features

Once we have a trained SAE, interpretation proceeds in two steps: finding what activates a feature, and understanding what that feature “does” to the model’s representations.

Finding What Activates a Feature

The standard approach is to collect max-activating examples. You run a large corpus through the LLM, collect residual stream activations at the layer of interest, pass them through the SAE encoder, and record which inputs produce the highest activation values for each feature position.

Say you’re investigating feature 476 at layer 7. You look at the top 50 tokens (and their surrounding contexts) that most strongly activate position 476 in the SAE hidden vector. If those tokens are all things like “dog,” “puppy,” “canine,” “bark,” and “leash,” you have a reasonable hypothesis: feature 476 responds to dog-related concepts.
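The ranking step itself is simple once the SAE activations have been collected. In this sketch, the `records` list is invented data standing in for (token, SAE hidden vector) pairs from a real corpus, and the hidden vectors have only three positions so that feature 1 plays the role of the "dog" feature.

```python
import heapq

def top_activating(records, feature_idx, n=3):
    """Return the n records with the highest activation at feature_idx."""
    return heapq.nlargest(n, records, key=lambda rec: rec[1][feature_idx])

# Hypothetical (token, SAE hidden vector) pairs.
records = [
    ("puppy", [0.0, 4.2, 0.0]),
    ("leash", [0.1, 2.5, 0.0]),
    ("bank",  [3.0, 0.0, 0.0]),
    ("dog",   [0.0, 5.1, 0.2]),
    ("river", [1.0, 0.0, 0.4]),
]

top = top_activating(records, feature_idx=1)
top_tokens = [token for token, _ in top]
print(top_tokens)
```

In practice you would also store the surrounding context for each token, since the pattern is often only visible in context.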

Sometimes the pattern is obvious. Sometimes it’s subtle—maybe the feature fires on “the last token before a period in formal writing” or “numbers that appear in financial contexts.” Sometimes the pattern is… unclear, and the feature remains uninterpretable.

The Decoder Column as a “Direction”

When we say feature 476 represents “dogs,” what we mean more precisely is: the 476th column of the decoder weight matrix $W_{\text{dec}}$ is a vector in residual stream space that the SAE has learned to associate with dog-related inputs.

You can think of this column as a direction in the model’s representation space. When the model processes dog-related content, the residual stream activation has a component pointing along this direction. The SAE encoder detects this and produces a high activation at position 476. The decoder then reconstructs that component by adding (activation $\times$ direction) back to the output.

The magnitude of the activation tells you “how much” of that concept is present in the current representation. A strong activation on the dog feature might indicate the token is explicitly about dogs; a weak activation might indicate a more tangential connection.
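Written out, the decoder's matrix product is just a weighted sum of columns: $\hat{x} = b_{\text{dec}} + \sum_j h_j \cdot W_{\text{dec}}[:, j]$. The sketch below makes that explicit with toy numbers; `W_dec` is stored as a list of rows with shape ($d_{\text{model}}$, $d_{\text{sae}}$), which is an assumption about layout rather than a fixed convention.

```python
def column(W, j):
    """The j-th column of a matrix stored as a list of rows."""
    return [row[j] for row in W]

def reconstruct(h, W_dec, b_dec):
    """x_hat = b_dec + sum over active features of activation * direction."""
    x_hat = list(b_dec)
    for j, act in enumerate(h):
        if act == 0.0:
            continue                      # inactive features add nothing
        direction = column(W_dec, j)
        x_hat = [xi + act * di for xi, di in zip(x_hat, direction)]
    return x_hat

W_dec = [[1.0, 0.0, 0.6],
         [0.0, 1.0, 0.8]]     # 2 x 3: three feature directions in 2-D space
b_dec = [0.0, 0.0]
h = [0.0, 2.0, 0.5]           # feature 1 strongly active, feature 2 weakly

x_hat = reconstruct(h, W_dec, b_dec)
print(x_hat)
```

Doubling `h[1]` moves the reconstruction twice as far along feature 1's direction, which is the sense in which the activation measures how much of a concept is present.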

From Activation to Intervention

This directional interpretation is what makes SAE features useful for causal analysis. If feature 476 truly represents “dog-ness,” then artificially increasing its activation should push the model’s internal state further in the dog direction—potentially steering downstream behavior toward dog-related outputs. Conversely, zeroing out the activation should remove that component from the representation.
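One common way to implement both interventions is to edit the residual stream along the feature's decoder direction, leaving everything else (including the SAE's reconstruction error) untouched. The sketch below shows that idea on toy numbers. The function name and values are my own, and this is not necessarily the exact procedure used by Wu et al.

```python
def set_feature(resid, direction, old_act, new_act):
    """Shift resid along `direction` so the feature's contribution
    changes from old_act * direction to new_act * direction."""
    delta = new_act - old_act
    return [r + delta * d for r, d in zip(resid, direction)]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

direction = [0.6, 0.8]     # unit-norm decoder column for the feature
resid = [1.0, 2.0]         # residual stream at one token position
old_act = 1.5              # activation the SAE encoder reported

ablated = set_feature(resid, direction, old_act, new_act=0.0)   # zero it out
steered = set_feature(resid, direction, old_act, new_act=5.0)   # amplify it

# Component of each vector along the feature direction.
print(dot(resid, direction), dot(ablated, direction), dot(steered, direction))
```

The patched vector then replaces the original activation for the rest of the forward pass, and you compare the model's outputs with and without the edit.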

SAE Limitations

SAEs assume features are linear directions in activation space—that you can cleanly add or subtract “dog-ness” or “translation-ness” as a single vector. This may not always hold. Some concepts might be represented nonlinearly in ways a linear decoder can’t capture. SAE features are also learned approximations, not ground truth. The decomposition depends on training data, sparsity penalty, expansion factor, and other hyperparameters. Different settings yield different feature dictionaries, and you lose information to reconstruction error.

More fundamentally, SAEs give you a snapshot of one layer at one token position. They don’t capture how information flows across layers or how features compose over the forward pass. If you want to understand circuits—where early features feed into later ones to produce complex behavior—SAEs alone won’t get you there. They tell you what features are present; understanding how those features interact requires additional machinery.

References

- Elhage, N., et al. A Mathematical Framework for Transformer Circuits. Anthropic, 2021.

- Mu, J. and Andreas, J. Compositional Explanations of Neurons. NeurIPS, 2020.

- Elhage, N., et al. Toy Models of Superposition. Anthropic, 2022.

- Cunningham, H., et al. Sparse Autoencoders Find Highly Interpretable Features in Language Models. ICLR, 2024.

- Xu, Z., et al. A Survey on Sparse Autoencoders: Interpreting the Internal Mechanisms of Large Language Models. arXiv, 2025.

- Lieberum, T., et al. Gemma Scope. Google DeepMind, 2024.

- He, Z., et al. Llama Scope. OpenMOSS, 2024.

- Jansen, L. Superposition is not “just” neuron polysemanticity. Alignment Forum, 2024.

- Wu, Z., et al. Finding the Translation Switch: Discovering and Exploiting the Task-Initiation Features in LLMs. AAAI, 2026.